- What are AI data centers really doing, and why do they consume so much energy?

- The bottlenecks of AI demands and data centers.

- The solutions on Earth and in space.

- The importance of data centers.

The AI Factory: What Data Centers Are Really Doing

Every breakthrough in AI—from the intelligent agents guiding humanoid robots to the complex algorithms driving generative AI—is powered by a single, colossal engine: the AI Data Center.

If the silicon chip is the brain of the AI revolution, the data center is the heart and lungs—a specialized, high-density computing facility built for one purpose: to handle the immense demands of machine learning and deep learning.


From Storage to Factory

Forget the image of a simple room storing data. According to Jensen Huang, CEO of NVIDIA, these are no longer the data centers of the past. He calls them "AI Factories."

"AI is now infrastructure, and this infrastructure, just like the internet, just like electricity, needs factories. They're not data centers of the past. They are, in fact, AI Factories. You apply energy to it, and it produces something incredibly valuable called tokens."

- Jensen Huang, CEO of NVIDIA

Their job is two-fold:

- Training: To feed vast datasets into neural networks, teaching them to think and generate, a process that requires massive, parallel power from specialized chips like GPUs (Graphics Processing Units).

- Inference: To run those trained models in real time and make instant decisions, such as the moment a robot recognizes an object or an AI chat platform delivers a coherent reply.
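The difference between the two jobs can be seen in miniature. The sketch below is a toy illustration, not how production systems are built: it assumes a one-parameter model `y = w * x`, made-up data, and hypothetical helper names `train` and `infer`. Training loops over the data many times to adjust the parameter; inference is a single cheap pass with the result.

```python
# Toy illustration only: a one-parameter "model" y = w * x.
# Real training runs the same kind of loop over billions of parameters on many GPUs.

def train(xs, ys, steps=500, lr=0.01):
    """Training: repeatedly adjust w to shrink the mean squared error."""
    w = 0.0
    n = len(xs)
    for _ in range(steps):
        # Gradient of mean((w*x - y)^2) with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / n
        w -= lr * grad
    return w


def infer(w, x):
    """Inference: apply the already-trained parameter to a new input."""
    return w * x


if __name__ == "__main__":
    # Hypothetical data that follows y = 2x.
    w = train([1, 2, 3, 4], [2, 4, 6, 8])
    print(round(w, 3))              # learned weight, close to 2
    print(round(infer(w, 10), 3))   # prediction for an unseen input
```

Training here takes 500 passes over the data while inference is one multiplication, which mirrors why training needs massive parallel compute and inference needs low latency.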

The Bottlenecks: A Triad of Constraints

The exponential growth in AI complexity is now bumping up against the limits of physics and Earth's existing infrastructure. The struggle boils down to three core problems: Power, Heat, and Connectivity.

- The Thermal Inferno: The Energy Black Hole

The shift to dense clusters of GPUs has sent power demands soaring, and this is the root of the heat problem. While a traditional server rack might draw 8–10 kW of power, a modern AI rack packed with powerful accelerators can consume 40 kW to over 120 kW, up to 15 times as much. A single high-end NVIDIA H100 GPU can draw up to 700 W, so just four of them generate about 2,800 W of heat, and a full rack holds many more.
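The scale of these numbers can be checked with a few lines of arithmetic. The sketch below uses the rack ranges quoted above. The 700 W per-GPU figure is an assumption (roughly the rated maximum of an H100 SXM module), and the helper names are illustrative.

```python
TRADITIONAL_RACK_KW = (8, 10)   # range quoted in the text
AI_RACK_KW = (40, 120)          # range quoted in the text
GPU_WATTS = 700                 # assumption: approximate maximum per-GPU draw


def max_ratio(ai_range, traditional_range):
    """Largest AI-to-traditional ratio: top of one range over bottom of the other."""
    return ai_range[1] / traditional_range[0]


def gpu_heat_watts(num_gpus, watts_per_gpu=GPU_WATTS):
    """Nearly all electrical power drawn by a chip leaves it as heat."""
    return num_gpus * watts_per_gpu


def gpus_per_rack_budget(rack_kw, watts_per_gpu=GPU_WATTS):
    """GPUs that fit in a rack power budget, ignoring CPUs, fans, and networking."""
    return int(rack_kw * 1000 // watts_per_gpu)


if __name__ == "__main__":
    print(max_ratio(AI_RACK_KW, TRADITIONAL_RACK_KW))  # 15.0
    print(gpu_heat_watts(4))                           # 2800
    print(gpus_per_rack_budget(10))                    # 14
    print(gpus_per_rack_budget(120))                   # 171
```

Under these assumptions, a traditional 10 kW budget covers only about 14 such GPUs with nothing left over for the rest of the server, which is why AI racks need an order of magnitude more power.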

This extreme concentration of energy demands is putting immense pressure on electrical grids, leading to multi-year delays for new connections.

“What the world lacks now is not money, but electricity and data centers for AI.”

- Elon Musk, CEO of Tesla and founder of xAI

- The Network Barrier: The Data Traffic Jam

Even if you manage the power and heat, there’s a new challenge: getting the chips to talk to each other fast enough. Training a large AI model requires thousands of GPUs to constantly synchronize data. This creates massive amounts of internal, server-to-server traffic known as "East-West" traffic.

The problem is speed. Up to 50% of an AI job's total time can be spent waiting for data across the network. To keep pace, the required network speed has jumped from the typical 10–100 Gbps to the necessary 400 Gbps to 1.6 Tbps (Terabits per second) per node. Scaling GPUs without scaling the network is like "building a multi-lane racetrack but only opening one lane." The network is the defining bottleneck that prevents expensive compute power from being fully utilized.
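A rough model shows why the network matters so much. The sketch below assumes, as a simplification, that only the network-wait share of a job shrinks when bandwidth grows, and that it shrinks in direct proportion. The function names are illustrative.

```python
def job_time_fraction(wait_fraction, bandwidth_speedup):
    """Remaining job time (as a fraction of the original) after a network upgrade.

    Assumes only the network-wait share shrinks, in proportion to the speedup.
    """
    compute = 1.0 - wait_fraction
    return compute + wait_fraction / bandwidth_speedup


def gpu_utilization(wait_fraction, bandwidth_speedup):
    """Share of the new job time that the GPUs spend computing."""
    compute = 1.0 - wait_fraction
    return compute / job_time_fraction(wait_fraction, bandwidth_speedup)


if __name__ == "__main__":
    # 50% of the job spent waiting, as in the worst case quoted above.
    for speedup in (1, 4, 16):  # e.g. 100 Gbps -> 100 Gbps, 400 Gbps, 1.6 Tbps
        print(speedup,
              job_time_fraction(0.5, speedup),
              round(gpu_utilization(0.5, speedup), 3))
```

Under these assumptions, a 4x faster network (for example 100 Gbps to 400 Gbps) brings the job down to 62.5% of its original time and lifts GPU utilization from 50% to 80%. A 16x jump (100 Gbps to 1.6 Tbps) gets to about 53% of the original time, so returns diminish once compute dominates.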

Immediate Solutions: Building Smarter on Earth

The industry is reacting with rapid, extreme engineering solutions to stretch Earth's capacity.

Liquid Cooling: Beyond Air

Air is too poor a coolant to handle loads above roughly 35 kW per rack, so the industry is embracing Liquid Cooling. This moves beyond the inefficiencies of air conditioning into two main methods:

- Direct-to-Chip Cooling: Liquid is channeled through plates mounted directly onto the hot components.

- Immersion Cooling: Servers are completely submerged in tanks of dielectric fluid. These fluids are specialized compounds, such as certain synthetic hydrocarbons or fluorochemicals, that do not conduct electricity (so they won't cause a short circuit) but are highly effective at absorbing and transferring heat. This can cut a facility's cooling energy use by up to 90%, significantly improving overall efficiency.
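What a 90% cut in cooling energy means for the whole facility depends on how much of the total goes to cooling in the first place. The sketch below assumes a hypothetical 30% cooling share, which is not a figure from this article. The function names are illustrative.

```python
def facility_energy_after(cooling_share, cooling_cut):
    """Facility energy (fraction of the original) after cutting cooling energy.

    cooling_share: fraction of total facility energy spent on cooling (assumed).
    cooling_cut:   fraction of cooling energy removed, e.g. 0.9 for "up to 90%".
    """
    return 1.0 - cooling_share * cooling_cut


def cooling_share_after(cooling_share, cooling_cut):
    """Cooling's share of the new, smaller facility total."""
    remaining_cooling = cooling_share * (1.0 - cooling_cut)
    return remaining_cooling / facility_energy_after(cooling_share, cooling_cut)


if __name__ == "__main__":
    share, cut = 0.30, 0.90  # hypothetical share, best-case cut from the text
    print(round(facility_energy_after(share, cut), 3))  # fraction of original energy
    print(round(cooling_share_after(share, cut), 3))    # cooling share afterwards
```

With these assumed inputs the facility would use about 73% of its original energy, and cooling would fall to roughly 4% of the new total.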

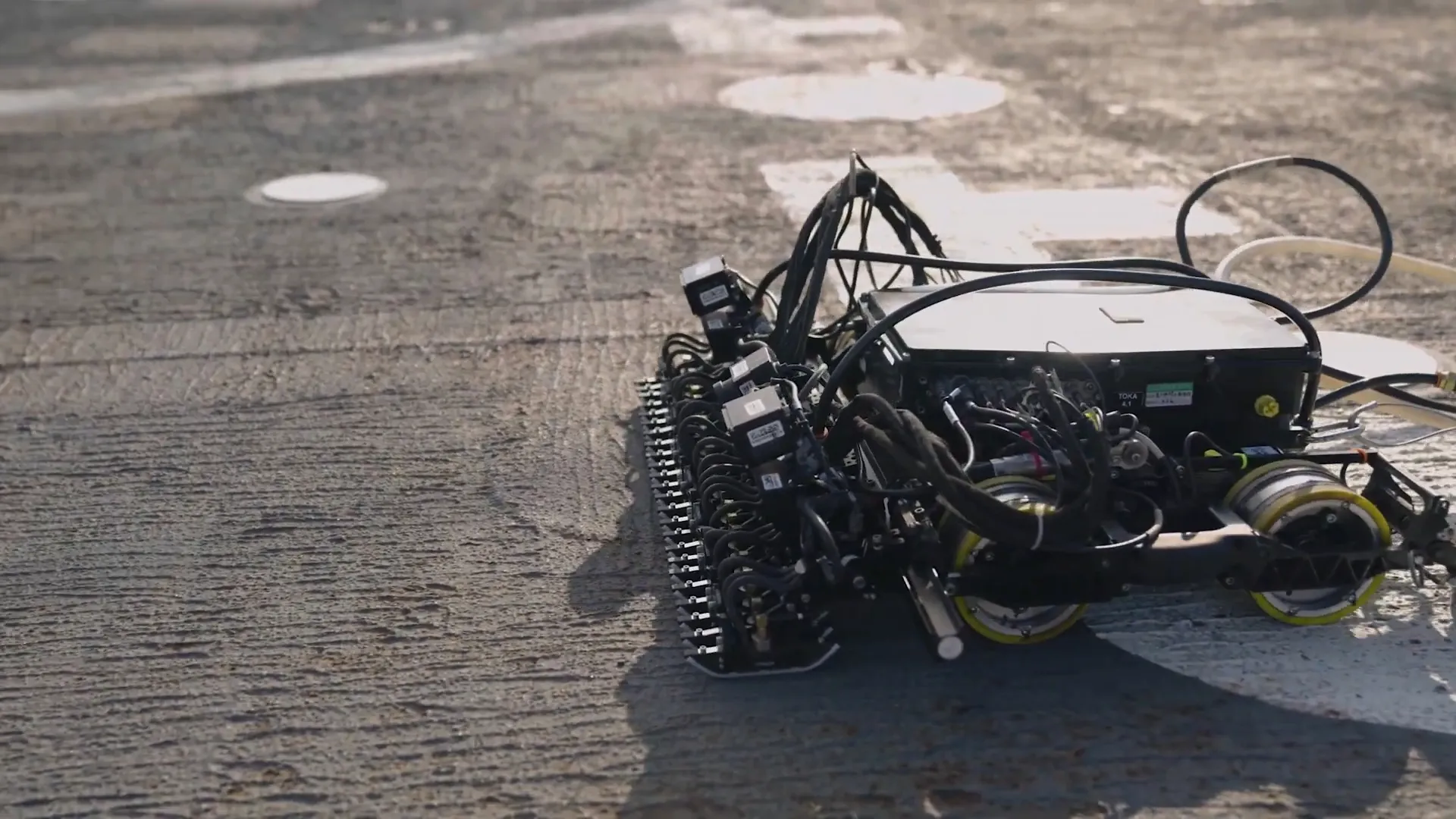

The Underwater Solution: China's UDCs

Some countries are looking to the largest heat sink available: the ocean.

China, particularly in Hainan Province, is pioneering commercial Underwater Data Centers (UDCs). Servers are sealed in watertight, pressurized capsules and submerged on the seabed. The cold surrounding seawater is then used as a natural, passive heat exchanger, eliminating the need for expensive fans and chillers.

While this can cut cooling energy to less than 10% of total consumption, it has sparked intense debates about environmental impact (local heat discharge), maintenance complexity, and even cyber-security risks.

Pioneers of the Frontier: Launching the AI Factory into Orbit

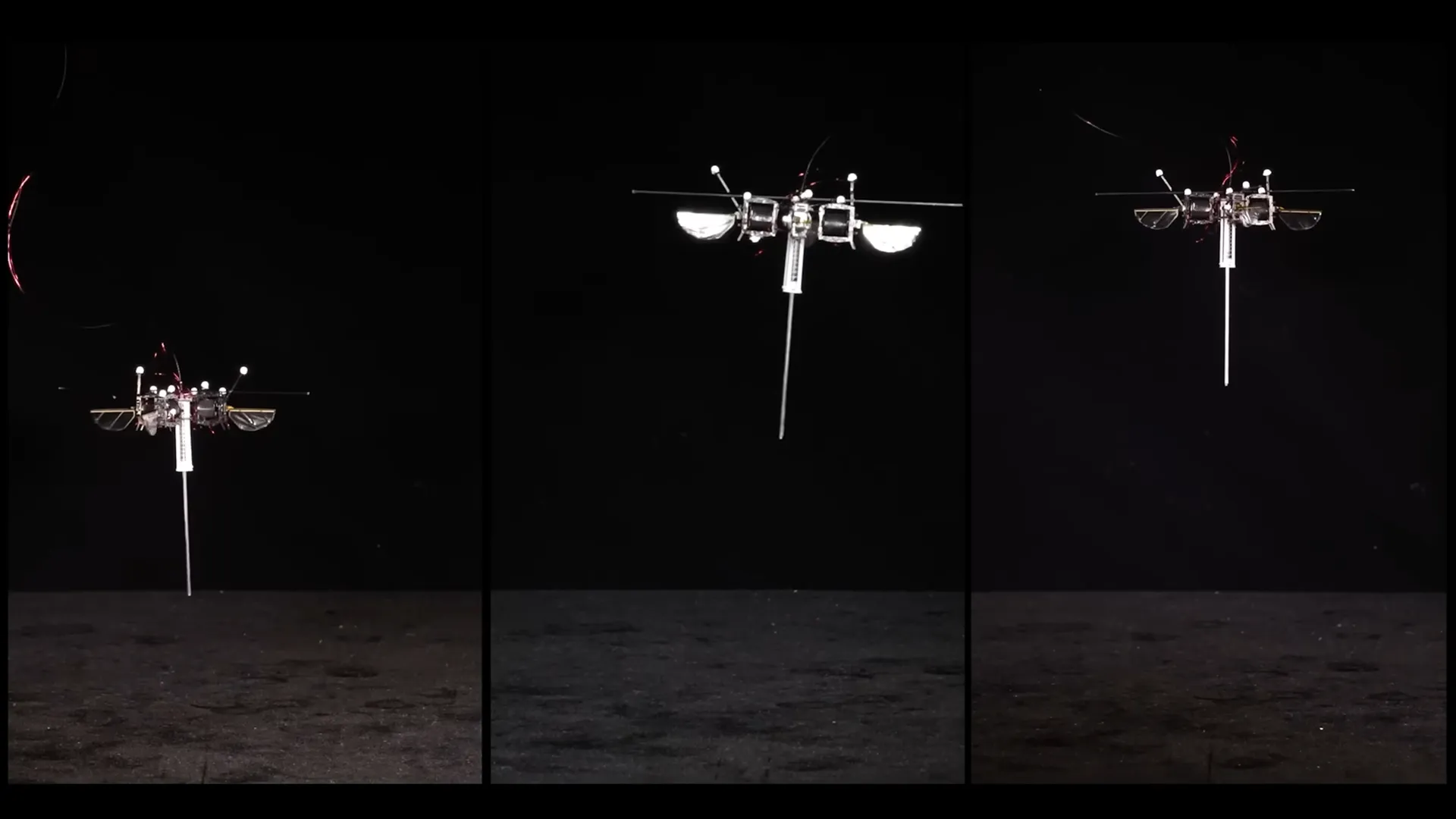

The ultimate solution to overcoming the power and cooling constraints is to leave the planet entirely. The cost of running AI on Earth is becoming so high that it is now economically viable to consider building data centers in space.

One notable pioneer is Starcloud, a Y Combinator-backed startup aiming to move high-performance computing to Low Earth Orbit (LEO).

In orbit, they aim to solve both primary bottlenecks:

- Unlimited Solar Energy: Continuous, abundant sunlight for 24/7 power.

- Free Cooling: The vacuum of space acts as an infinite heat sink, allowing waste heat to be rejected purely through radiative cooling, without using a drop of water or a single fan.
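Radiative cooling follows the Stefan-Boltzmann law: a surface at temperature T radiates power proportional to T to the fourth power. The sketch below estimates the radiator area needed to reject a given heat load. It is an idealized estimate under stated assumptions: the emissivity (0.9), the radiator temperature (300 K), and the 700 W load are illustrative, and the model ignores absorbed sunlight, infrared from Earth, and radiator geometry.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiated_watts_per_m2(temp_k, emissivity=0.9):
    """Power radiated per square metre of surface at temp_k (ideal, one-sided)."""
    return emissivity * STEFAN_BOLTZMANN * temp_k ** 4


def radiator_area_m2(heat_watts, temp_k, emissivity=0.9):
    """Radiator area needed to reject heat_watts purely by radiation."""
    return heat_watts / radiated_watts_per_m2(temp_k, emissivity)


if __name__ == "__main__":
    # Assumed values: one 700 W accelerator, radiator held at 300 K.
    print(round(radiated_watts_per_m2(300), 1))   # W per m^2
    print(round(radiator_area_m2(700, 300), 2))   # m^2 for one GPU
```

Under these assumptions a radiator sheds about 413 W per square metre, so a single 700 W chip needs roughly 1.7 square metres of radiator. No water or fans are required, but radiator area grows in step with compute power.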

The Prototype Mission (Starcloud-1)

Starcloud successfully launched a refrigerator-sized satellite carrying an NVIDIA H100 GPU into orbit. This mission was a historic proof of concept, demonstrating that powerful, commercial-grade AI chips could operate effectively in space. The goal of this test was to validate the proprietary thermal management system and test workloads like fine-tuning AI models and processing data in orbit.

The Commercial Satellite (Starcloud-2)

The next step is the launch of Starcloud-2, the company's first commercial satellite, scheduled to be fully operational in a sun-synchronous orbit by 2026. This satellite will scale up the capacity significantly and is being built in partnership with cloud providers like Crusoe Cloud.

Starcloud-2’s Key Features:

- GPU Cluster: Features a full GPU cluster, persistent storage, and next-generation NVIDIA accelerators (such as the Blackwell platform).

- Mission: It functions as a true sovereign cloud computing platform, fully independent of Earth.

- Dual Use: It not only provides AI computing power for Earth-based clients but also enables real-time analysis of Earth observation data generated by other satellites. This removes the need to downlink terabytes of raw data: only the resulting insights are sent to the ground, easing the bandwidth bottleneck between space and Earth.
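The bandwidth argument comes down to simple division. The sketch below compares downlinking raw data with downlinking a processed summary. The 1 TB and 1 MB sizes and the 1 Gbps link rate are hypothetical values chosen for illustration, not Starcloud specifications.

```python
def transfer_seconds(num_bytes, link_bits_per_second):
    """Time to send num_bytes over a link, ignoring protocol overhead and outages."""
    return num_bytes * 8 / link_bits_per_second


if __name__ == "__main__":
    link_bps = 1e9       # hypothetical 1 Gbps downlink
    raw_bytes = 1e12     # hypothetical 1 TB of raw imagery
    summary_bytes = 1e6  # hypothetical 1 MB of extracted insights

    print(transfer_seconds(raw_bytes, link_bps))      # seconds for the raw data
    print(transfer_seconds(summary_bytes, link_bps))  # seconds for the summary
```

With these numbers the raw data would occupy the link for 8,000 seconds (over two hours), while the summary takes a few milliseconds.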

"My estimate is that the cost of electricity, the cost effectiveness of AI in space will be overwhelmingly better than AI on the ground...the lowest cost way to do AI compute will be with solar-powered AI satellites."

- Elon Musk, CEO of Tesla and founder of xAI

Conclusion

The AI revolution is not just a battle fought in software; it is a profound conflict against the physical limitations of our planet. The AI Factory, the engine powering the next generation of LLMs, intelligent agents, and sophisticated humanoid robotics, has simply outgrown our current infrastructure.

The core challenge remains a fundamental war against thermodynamics: how to power thousands of high-density GPUs without melting down the machine or overloading the grid. The industry's response has been nothing short of extraordinary.

We are seeing a dual-pronged approach to scaling:

- Mastering the Micro: Innovating at the smallest scale by adopting liquid cooling, submerging entire racks in efficient dielectric fluids, and perfecting the physics of heat transfer to keep compute dense and efficient.

- Conquering the Macro: Escaping terrestrial constraints by building submerged facilities like China’s Underwater Data Centers (UDCs), or boldly launching orbital factories like Starcloud-2 into space to harness unlimited solar power and passive cooling.

The future of Artificial Intelligence—and the continued advancement of robotics—hinges entirely on the speed at which engineers can break these barriers. The energy and connectivity bottlenecks are the last great physical hurdles before the digital age can fully realize its promise. The race is on, and the engine room of tomorrow’s AI may well be cooled by the deep ocean or powered by the endless sun.